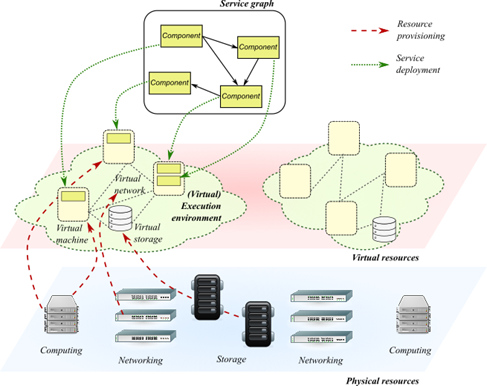

The growing adoption of virtualization technologies and micro-services architectures (e.g., for cloud computing and network function virtualization) is re-shaping the traditional security paradigms for running software appliances. As a matter of fact, such services are usually designed as “graphs” or “chains” of simple applications (often called micro-services), connected by virtual (network) links, and deployed over a virtualised set of computing, storage, and networking resources, indicated as the “execution environment”. Although de-coupling software from the underlying infrastructure brings immediate benefits in terms of elasticity, portability, automation and resiliency, the intermediate hypervisor tier also raises new security concerns about the mutual trustworthiness between those two layers and the potential threats in the virtualization substrate.

Unlike the legacy approach, where many appliances are delivered with a predefined, verified, and static software stack on well-known hardware, emerging software development paradigms increasingly use third-party services available in public marketplaces, which are then deployed in public virtualization infrastructures. Virtualization is responsible for isolating the computing, networking, and storage resources of different tenants, but cloud users substantially rely on operation by a third party. As is well-established practice in physical installations, security appliances such as Intrusion Prevention/Detection Systems, firewalls, and antivirus software can be applied within each sandbox by integrating them into the service graph design, in order to inspect software, network traffic, and user and application behaviour. This approach usually brings a large overhead on service graph execution, the need for more resources (CPU, memory), and a tight integration between service designers and security staff (which is not always simple to achieve in current development processes). Additionally, these components are built in the classic fashion, protecting the system only against outside attacks, whereas most cloud attacks now compromise the network functions themselves, using tenant networks that run in parallel with the compromised system.

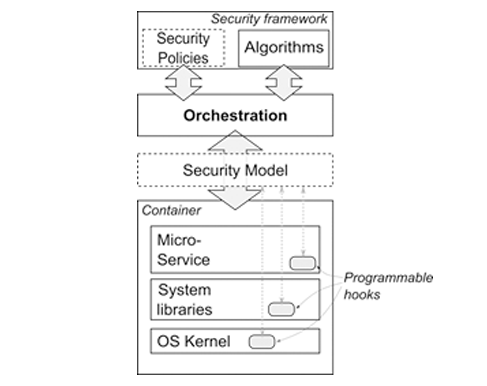

The general approach of ASTRID is the tight integration of security aspects into the orchestration process.

Starting from the descriptive and applicative semantics of a Security Model, orchestration is expected to deploy the service and manage its lifetime, adapting the awareness layer of individual components and of the whole service graph to the specific needs of the detection algorithms. This means that monitoring operations, the types and frequency of event reporting, and the level of logging are selectively and locally adjusted to retrieve exactly the amount of knowledge required, without overwhelming the whole system with unnecessary information. The purpose is to obtain more detail on critical or vulnerable components when detected anomalies may indicate an attack, or when cyber-security teams issue a warning about newly discovered threats and vulnerabilities.
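
The selective, local adjustment described above can be illustrated with a minimal Python sketch. It is not part of ASTRID: the `Verbosity` levels, the `MonitoringPolicy` fields, the interval values, and the `adjust_policies` function are all hypothetical names and numbers chosen for illustration. The sketch only shows the idea of raising the level of detail for flagged components while leaving the rest of the graph at a low-cost default.

```python
from dataclasses import dataclass
from enum import IntEnum


class Verbosity(IntEnum):
    """Hypothetical reporting levels; not ASTRID terminology."""
    MINIMAL = 0
    NORMAL = 1
    DETAILED = 2


@dataclass
class MonitoringPolicy:
    """Per-component monitoring settings (illustrative fields and defaults)."""
    verbosity: Verbosity = Verbosity.MINIMAL
    report_interval_s: float = 60.0


def adjust_policies(policies, suspicious, advisories=frozenset()):
    """Raise detail only for components flagged as anomalous or named in a
    security advisory; every other component goes back to the default."""
    updated = {}
    for name in policies:
        if name in suspicious or name in advisories:
            updated[name] = MonitoringPolicy(Verbosity.DETAILED, 5.0)
        else:
            updated[name] = MonitoringPolicy()
    return updated


# A three-component service graph in which an anomaly was seen on "db".
graph = {"web": MonitoringPolicy(), "db": MonitoringPolicy(), "cache": MonitoringPolicy()}
graph = adjust_policies(graph, suspicious={"db"})
```

In this sketch only `db` reports in detail and at short intervals; `web` and `cache` keep the default policy, so the extra monitoring load stays confined to the component under suspicion.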

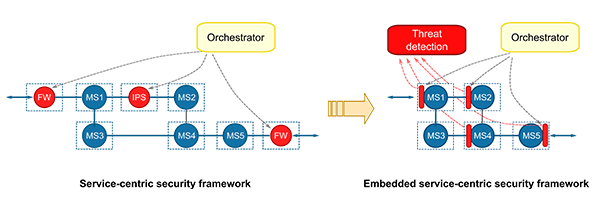

The distinctive aspect of the ASTRID approach is that no additional instances of security appliances are explicitly added and deployed in the execution environment, unlike some recent proposals in this field, which typically do so. The vision of ASTRID is a transition to embedded, service-centric security frameworks, which decouple the monitoring and inspection capabilities in the virtual functions and their containers from the algorithms that detect threats, anomalies, vulnerabilities, and attacks. A Security Model represents the abstraction between the two layers. It uses specific semantics to describe which security functions are implemented by the programmable security hooks present in the virtualisation container (in the OS kernel, in system libraries, and in the virtual function code), e.g., logging, event reporting, filtering, deep packet inspection, and system call interception. Through the Security Model, the orchestration layer knows what kinds of operations can be carried out on each component, collects data and measurements, and feeds the detection logic, which analyses and correlates information at graph level to detect threats.
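
As a rough illustration of the role the Security Model plays, the sketch below represents it as a plain Python data structure that records which hook capabilities each component exposes. The capability names are taken from the examples in the paragraph above; the schema itself (`ComponentModel`, `SecurityModel`, `supporting`) is an assumption made for this example and does not reflect the actual ASTRID semantics.

```python
from dataclasses import dataclass, field

# Capability names borrowed from the examples in the text.
CAPABILITIES = {
    "logging",
    "event_reporting",
    "filtering",
    "deep_packet_inspection",
    "syscall_interception",
}


@dataclass
class ComponentModel:
    """What the security hooks of one virtual function can do (hypothetical schema)."""
    name: str
    hooks: set = field(default_factory=set)

    def __post_init__(self):
        # Reject capability names that the model does not define.
        unknown = self.hooks - CAPABILITIES
        if unknown:
            raise ValueError(f"unknown capabilities: {sorted(unknown)}")


@dataclass
class SecurityModel:
    """Abstraction between the hooks in the graph and the detection logic."""
    components: dict = field(default_factory=dict)

    def add(self, component):
        self.components[component.name] = component

    def supporting(self, capability):
        """Sorted names of components on which `capability` could be enabled."""
        return sorted(n for n, c in self.components.items() if capability in c.hooks)


model = SecurityModel()
model.add(ComponentModel("web", {"logging", "filtering"}))
model.add(ComponentModel("db", {"logging", "syscall_interception"}))
```

With such a description, the detection side can ask which components support a given operation (for example `model.supporting("logging")`) without knowing how each hook is implemented in the kernel, the libraries, or the function code.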

The ASTRID concept brings far more dynamicity, scalability, robustness, and self-adaptability than any other approach proposed so far:

- Programming of the data plane requires far less time than deploying, removing, or migrating security appliances within the service graph.

- Reduced overhead on graph execution and attack surface, since most of the detection logic typically integrated in security instances is shifted outside the service graph.

- The intermediate orchestration layer also enables reaction and mitigation actions, hence shortening the time to respond to attacks. For instance, it can dynamically update an application that has been found vulnerable to a specific threat, or replace it with another one that performs the same job but is not vulnerable to that threat. Or, when an attack is detected and pinpointed, it can reinforce protection by instantiating traffic filtering rules in front of the victim service, which discard most of the unwanted traffic. Or, when an application has been compromised, it can either disconnect it (so that the attacker cannot go anywhere) or keep it isolated and use it as a honeypot, passing it “fake” traffic to deceive the attacker and collect useful information about their behaviour.

- Pulling security appliances out of service graph design makes them independent of the chaining and orchestration models, hence common solutions may be developed for different domains (e.g., cloud and NFV).

- The targeted set of actions provides the means to dynamically adapt the system to the type of attack, using the elasticity properties of the cloud infrastructure.

- The capability to deploy transparent honeypots in parallel, which enable the monitoring of attacks in a safe environment, provides the means to dynamically adapt and improve the attack signatures without requiring additional networks.

- The centralization of log information and the possibility of duplicating and inspecting the data traffic make it possible for the orchestration to execute complex forensic operations, which are the only possible solution to determine vulnerabilities in a complex multi-tier environment such as the cloud.
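
The reaction and mitigation options mentioned in the list above (replacing a vulnerable application, filtering traffic in front of a victim service, disconnecting a compromised application or keeping it as a honeypot) can be sketched as a small decision function. This is only an illustrative Python sketch: the `Finding` categories, the action names, and the `plan_reaction` function are hypothetical and are not defined by ASTRID.

```python
from enum import Enum


class Finding(Enum):
    """Hypothetical outcomes of the detection logic."""
    VULNERABLE = "vulnerable"      # component exposed to a known threat
    UNDER_ATTACK = "under_attack"  # attack detected and pinpointed
    COMPROMISED = "compromised"    # attacker controls the component


def plan_reaction(component, finding, keep_as_honeypot=False):
    """Return a list of (action, target) steps; action names are illustrative."""
    if finding is Finding.VULNERABLE:
        return [("replace_with_equivalent", component)]
    if finding is Finding.UNDER_ATTACK:
        return [("install_filter_in_front_of", component)]
    if finding is Finding.COMPROMISED:
        if keep_as_honeypot:
            # Keep the component isolated and feed it fake traffic.
            return [("isolate", component), ("feed_fake_traffic", component)]
        return [("disconnect", component)]
    raise ValueError(f"unhandled finding: {finding!r}")


steps = plan_reaction("db", Finding.COMPROMISED, keep_as_honeypot=True)
```

Returning a plan as data, rather than acting directly, is a design choice of this sketch: it keeps the decision separate from whatever orchestration interface would eventually carry out the steps.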